What Is Model Collapse in AI? A Practical Guide for 2026

Why Model Collapse Is Now a Real Problem

For many AI systems, compute is no longer the only limiting factor. Increasingly, the limit is data quality.

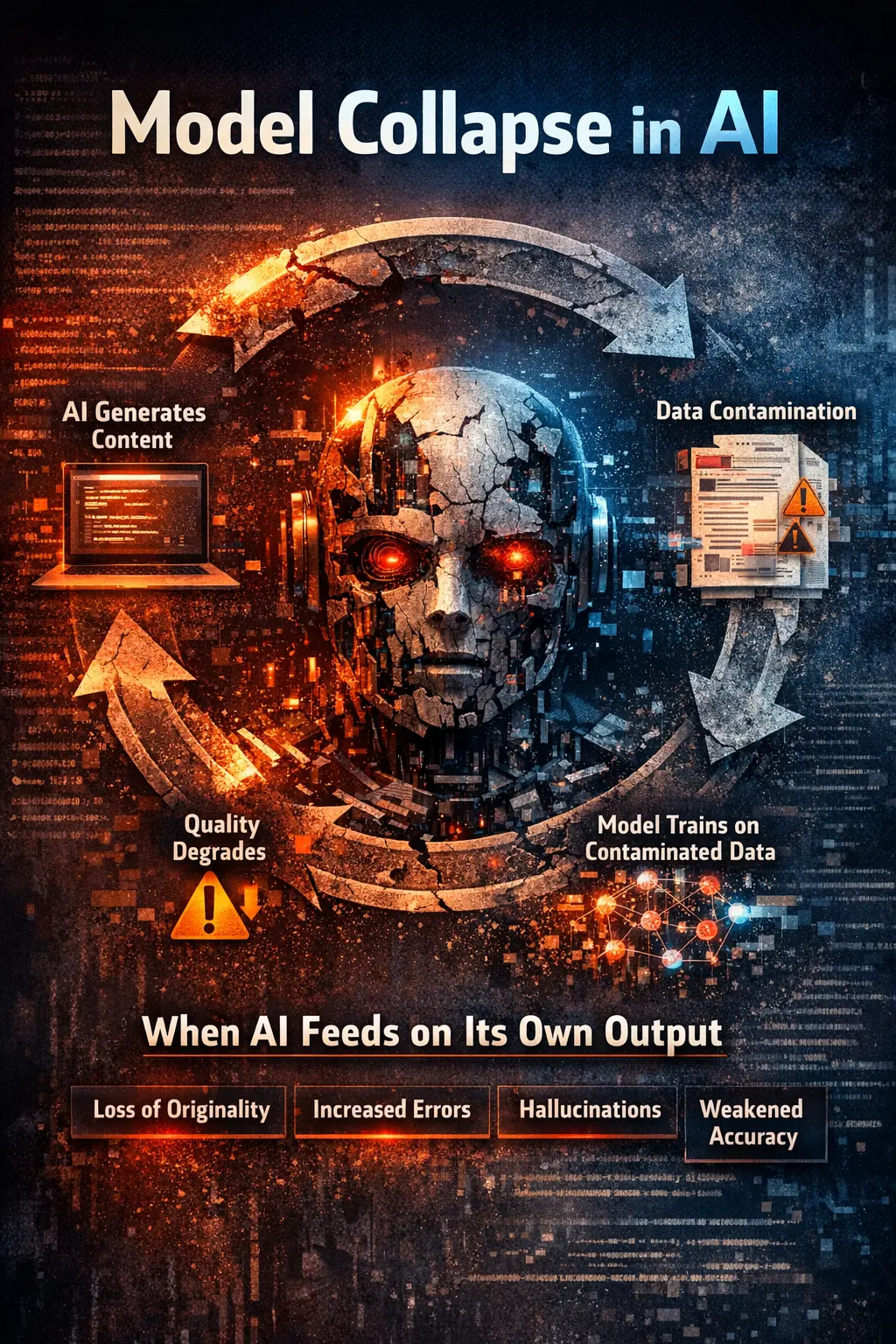

A major shift is under way. A large and growing portion of online content is generated by AI. This content is reused, rewritten, and redistributed across platforms. When new models are trained on data collected from those platforms, they learn patterns that are already synthetic.

This creates a feedback loop. The result is Model Collapse.

What Is Model Collapse

Model Collapse is the gradual degradation that happens when AI systems are trained, generation after generation, on data that includes outputs from previous AI models.

Instead of learning from real human knowledge, the model learns from its own kind. Over time, this leads to:

- Loss of originality

- Reduced diversity

- Lower factual accuracy

- Increased hallucinations

In simple terms, the model starts drifting away from reality.

How Model Collapse Actually Happens

The process is simple but dangerous.

- AI generates content

- That content spreads online

- New datasets collect this content

- New models train on it

- Quality degrades

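This loop repeats again and again. Each cycle drops some of the rare patterns in the original data and carries forward the previous model's mistakes, so signal quality falls and noise increases.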
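The loop above can be sketched with a toy model. This is a minimal illustration, not a simulation of a real language model: it assumes the "model" is just a Gaussian fitted to a small sample, and that each new dataset is drawn only from the previous fit. The function name and parameters are hypothetical.

```python
import random
import statistics


def simulate_recursive_training(generations=200, sample_size=10, seed=0):
    """Refit a Gaussian to its own samples and record the spread each round."""
    rng = random.Random(seed)
    # Generation 0: stand-in for "human" data, drawn from a standard normal.
    data = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]
    spreads = []
    for _ in range(generations):
        # "Train": estimate the mean and standard deviation of the current data.
        mean = statistics.fmean(data)
        spread = statistics.pstdev(data)
        spreads.append(spread)
        # "Generate": the next dataset comes only from the fitted model.
        data = [rng.gauss(mean, spread) for _ in range(sample_size)]
    return spreads


spreads = simulate_recursive_training()
print(f"spread in first generation: {spreads[0]:.3g}")
print(f"spread in last generation:  {spreads[-1]:.3g}")
```

In this toy setup the spread shrinks toward zero over many rounds, because every refit loses a little of the tails and nothing ever puts them back. Real systems are far more complex, but the direction of the effect is the same one described above.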

A Hypothetical Example

Imagine you train a model on 100 percent human-written data.

Now imagine the next version is trained on:

- 60 percent human data

- 40 percent AI-generated data

Then the next version:

- 40 percent human data

- 60 percent AI-generated data

Within a few cycles, the model is mostly learning from synthetic patterns. This is where collapse begins.
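To put a number on the example above, here is a small sketch that tracks how far the training data sits from human writing, on average. It rests on a simplifying assumption that is not in the example itself: all AI-generated data in one version comes from the version just before it. Human text counts as depth 0, and text from a model is one step deeper than that model's own training data. The function name is hypothetical.

```python
def average_synthetic_depth(human_fractions):
    """Average number of model hops between the training data and human text."""
    depths = []
    previous_depth = 0.0
    for human_fraction in human_fractions:
        synthetic_fraction = 1.0 - human_fraction
        # Human data contributes depth 0. Synthetic data is one hop deeper
        # than the data the previous version was trained on.
        depth = synthetic_fraction * (previous_depth + 1.0)
        depths.append(depth)
        previous_depth = depth
    return depths


# Human share per version, taken from the example: 100, 60, then 40 percent.
schedule = [1.0, 0.6, 0.4]
for version, depth in enumerate(average_synthetic_depth(schedule), start=1):
    print(f"version {version}: average depth {depth:.2f}")
```

Under these assumptions the average depth goes from 0.00 to 0.40 to 0.84 in three versions. The human share fell by 60 points, but the data is also getting further from its source with every cycle.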

Key Symptoms of Model Collapse

- Repetitive answers across different queries

- Generic language with low uniqueness

- Weak reasoning and shallow insights

- Increased hallucinated facts

- Loss of domain depth

If your AI outputs start looking the same across topics, you may already be seeing early-stage collapse.
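One rough way to watch for the first two symptoms is a distinct-n score: the share of n-grams in a batch of outputs that are unique. The sketch below assumes simple whitespace tokenization, which is crude, and the sample sentences are made up for illustration. A falling score across model versions is a warning sign, not proof of collapse.

```python
def distinct_n(texts, n=2):
    """Fraction of n-grams across all texts that are unique (0.0 to 1.0)."""
    total = 0
    unique = set()
    for text in texts:
        tokens = text.lower().split()
        # Slide a window of n tokens over each text.
        for i in range(len(tokens) - n + 1):
            unique.add(tuple(tokens[i:i + n]))
            total += 1
    return len(unique) / total if total else 0.0


repetitive = ["the cat sat", "the cat sat"]
varied = ["the cat sat", "a dog ran"]
print(distinct_n(repetitive))  # 0.5: half of the bigrams are repeats
print(distinct_n(varied))      # 1.0: every bigram is unique
```

Run it on answers to the same fixed set of prompts for each model version, so the scores are comparable.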

Why This Is a Serious Risk

- AI Accuracy: Incorrect outputs increase over time

- Business Decisions: Bad data leads to bad decisions

- Research Quality: Scientific and analytical outputs degrade

- Trust in AI Systems: Users lose confidence when errors increase

If you ignore this problem, your models will slowly become unreliable.

The Root Cause

The root cause is a lack of data verification and measurement. Specifically, there is no record of:

- How much data is synthetic

- Where the data comes from

- How many times it has been reused

Without this, contamination grows silently.
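Those three questions can be answered with very little tooling once each record carries basic provenance. The sketch below is one possible shape, not a standard: the `Record` fields and the source labels are hypothetical, the `is_synthetic` flag is assumed to come from upstream labelling, and reuse is counted only for near-exact duplicates (same text after lowercasing and whitespace cleanup).

```python
import hashlib
from collections import Counter
from dataclasses import dataclass


@dataclass
class Record:
    text: str
    source: str         # hypothetical label such as "forum" or "model-output"
    is_synthetic: bool  # assumed to be supplied by upstream labelling


def fingerprint(text):
    """Hash of the text after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def summarize(records):
    """Report synthetic share, counts per source, and the highest reuse count."""
    if not records:
        return {"synthetic_ratio": 0.0, "by_source": {}, "max_reuse": 0}
    reuse = Counter(fingerprint(r.text) for r in records)
    return {
        "synthetic_ratio": sum(r.is_synthetic for r in records) / len(records),
        "by_source": dict(Counter(r.source for r in records)),
        "max_reuse": max(reuse.values()),
    }


sample = [
    Record("Collapse starts slowly.", "forum", False),
    Record("collapse   starts slowly.", "model-output", True),
    Record("Rare facts vanish first.", "news", False),
    Record("Data quality matters.", "model-output", True),
]
print(summarize(sample))
```

Paraphrased reuse will slip past an exact-match fingerprint, so treat `max_reuse` as a lower bound.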

How to Prevent Model Collapse

You need a structured approach.

- Prioritize human-verified data

- Track data sources and origin

- Limit recursive reuse of AI content

- Maintain diversity in datasets

- Audit datasets before training

Most importantly, you need a way to measure contamination.
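As an illustration of limiting AI content and auditing before training, here is a minimal sketch of a gate that keeps every human record and admits synthetic records only up to a chosen share of the final dataset. The function name and the 20 percent default are assumptions for the example, not recommended values, and the synthetic flag is again assumed to be supplied upstream.

```python
import math


def cap_synthetic(records, max_synthetic_ratio=0.2):
    """Keep all human records and as many synthetic ones as the cap allows.

    records is a list of (text, is_synthetic) pairs.
    """
    if not 0.0 <= max_synthetic_ratio < 1.0:
        raise ValueError("max_synthetic_ratio must be at least 0 and below 1")
    human = [r for r in records if not r[1]]
    synthetic = [r for r in records if r[1]]
    # Largest s such that s / (len(human) + s) <= max_synthetic_ratio.
    # The small epsilon guards against floating-point rounding just below s.
    allowed = math.floor(
        max_synthetic_ratio * len(human) / (1.0 - max_synthetic_ratio) + 1e-9
    )
    return human + synthetic[:allowed]


raw = [(f"human {i}", False) for i in range(4)]
raw += [(f"synthetic {i}", True) for i in range(10)]
kept = cap_synthetic(raw, max_synthetic_ratio=0.5)
print(len(kept), sum(1 for _, flag in kept if flag))  # 8 records, 4 synthetic
```

This sketch keeps the first synthetic records it sees. A real pipeline would probably rank them by quality or source confidence before cutting.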

Where SDCI Fits In

This is where the Synthetic Data Contamination Index (SDCI) becomes important.

SDCI gives you a way to measure:

- Synthetic content ratio

- Recursive depth

- Data source confidence

- Language diversity

- Human knowledge presence

Instead of guessing, you get a clear score from 0 to 100. This allows you to detect risk early, improve dataset quality, and protect model performance.
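This article does not spell out how SDCI combines its five inputs, so the sketch below is only a guess at what a composite score of this kind could look like. Everything in it is an assumption: the equal weights, the input ranges, the `max_depth` cutoff, and the choice that a higher score means more contamination. It is not the SDCI formula.

```python
def contamination_score(synthetic_ratio, recursive_depth, source_confidence,
                        language_diversity, human_presence, max_depth=5.0):
    """Hypothetical 0-100 composite score; higher means more contaminated.

    All inputs except recursive_depth are expected in the range 0 to 1.
    """
    def clamp(value):
        return max(0.0, min(1.0, value))

    # Turn every input into a risk between 0 and 1, where 1 is worst.
    risks = [
        clamp(synthetic_ratio),
        clamp(recursive_depth / max_depth),
        1.0 - clamp(source_confidence),
        1.0 - clamp(language_diversity),
        1.0 - clamp(human_presence),
    ]
    # Equal weights are an assumption made for this example.
    return 100.0 * sum(risks) / len(risks)


print(contamination_score(0.0, 0.0, 1.0, 1.0, 1.0))  # clean dataset
print(contamination_score(0.5, 2.5, 0.5, 0.5, 0.5))  # mid-range on every input
```

A single number is easy to track over time, but keep the five inputs visible next to it. Two datasets can share a score for very different reasons.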

Why This Matters in 2026 and Beyond

As AI-generated content keeps growing, the trend points in one direction:

- More synthetic data

- More recursion

- Higher collapse risk

If you are building AI systems, you cannot ignore this. Data integrity will define success.

Final Thought

You are not just training models. You are shaping how machines understand reality.

If your data is weak, your model will fail. If your data is strong, your model will last.

Start thinking about data quality today, because model collapse is not coming. It has already started.